
RunPod AI

What is RunPod?

RunPod is a cloud platform that provides on-demand access to GPU computing. Instead of buying expensive hardware, users rent computing power when they need it. This is useful for AI training, machine learning, rendering, and other compute-heavy tasks.

The platform serves developers, researchers, and hobbyists who need scalable computing resources. A startup training an AI model can use RunPod without investing in a server room. A researcher running complex simulations can spin up instances for a few hours and then shut them down. An individual learning machine learning can access professional-grade GPUs without buying the equipment. RunPod aims to democratize access to high-performance computing by making powerful GPUs affordable to a wide range of users.

Specifications

| Category | Details |
| --- | --- |
| Primary Functions | Provides on-demand GPU Pods for AI training, fine-tuning, and custom workloads. |
| Key Technology | |
| Integrations | |
| Special Features | Zero Egress Fees |
| Pricing | Paid |
| Free Trial | Not Available |

Key Features

On-Demand GPU Access: Users rent computing power only when they need it. There is no long-term commitment or hardware purchase required.

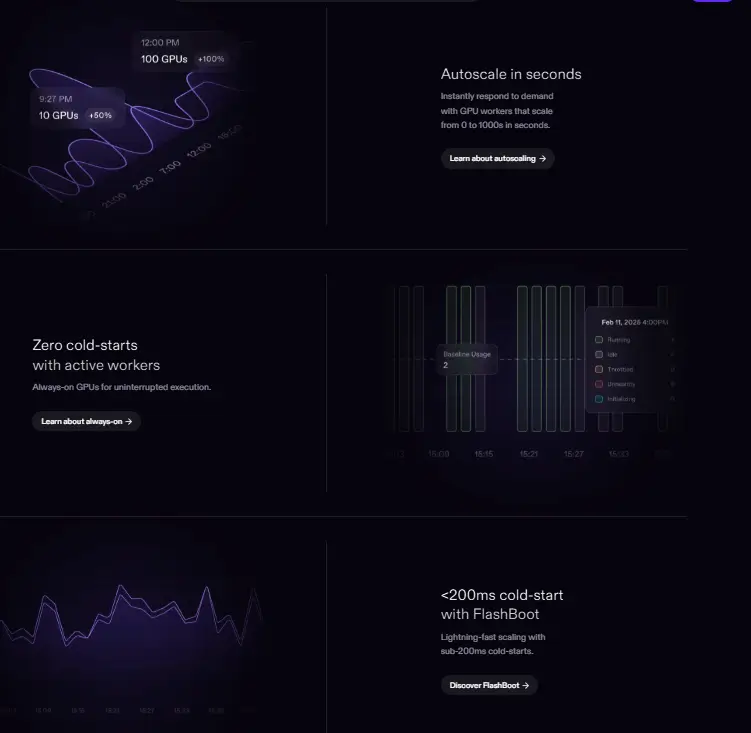

Scalable Infrastructure: Start with a single GPU instance. Scale up to clusters for larger workloads. Resources grow with project demands.

Pay-as-You-Go Pricing: Pay only for what you use. No upfront costs or idle hardware charges. This makes high-performance computing accessible to individuals and small teams.

Developer-Friendly Interface: Access resources through a web interface or API. Works with standard machine learning frameworks and development tools.

Flexible Workload Support: Suitable for AI model training, inference, rendering, batch processing, and other compute-intensive applications.

Democratized Access: Removes cost barriers to high-performance computing. Individuals and small organizations can access resources once reserved for large enterprises.

Pros

✅ No upfront hardware investment required

✅ Pay only for what you use

✅ Scales from small projects to large workloads

✅ Accessible to individuals, startups, and enterprises

✅ Democratizes high-performance computing

✅ Works with standard development tools

Cons

❌ Requires technical knowledge to set up and manage instances

❌ Specific per-GPU rates are not listed in this review

❌ Best suited for users comfortable with cloud infrastructure

❌ Network latency may vary depending on region

Who is Using RunPod?

AI Researchers: They train models without investing in expensive hardware. They spin up powerful instances for experiments and shut them down when done.

Machine Learning Engineers: They deploy and scale models for inference. They use RunPod for production workloads without managing physical servers.

Developers: They test and run GPU-intensive applications. They access computing power on demand without waiting for hardware procurement.

Startups: They build AI products without large capital expenses. They scale computing resources as their user base grows.

Hobbyists and Students: They learn machine learning and AI on professional-grade hardware. They experiment without buying expensive equipment.

Creative Professionals: They use RunPod for 3D rendering, video processing, and other creative workloads that require powerful GPUs.

Pricing

Pay-as-You-Go: Users pay only for the computing resources they use. There are no long-term contracts or upfront hardware costs.

Custom Enterprise Plans: Organizations with large-scale needs can contact RunPod for custom pricing and dedicated resources.
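
The pay-as-you-go model comes down to simple arithmetic: cost is rate times hours times GPU count. The sketch below illustrates that calculation and a rough rent-versus-buy break-even. The function names, the hourly rate, and the hardware price are all hypothetical placeholders chosen for illustration; they are not RunPod's actual rates, so check the official website for current pricing.

```python
def rental_cost(hourly_rate_usd: float, hours: float, gpus: int = 1) -> float:
    """Cost of renting `gpus` GPUs for `hours` at a flat hourly rate."""
    if hourly_rate_usd < 0 or hours < 0 or gpus < 1:
        raise ValueError("rate and hours must be non-negative, gpus at least 1")
    return hourly_rate_usd * hours * gpus


def break_even_hours(hardware_price_usd: float, hourly_rate_usd: float) -> float:
    """Hours of rental that would cost as much as buying the hardware outright.

    Ignores power, cooling, maintenance, and resale value.
    """
    if hourly_rate_usd <= 0:
        raise ValueError("hourly rate must be positive")
    return hardware_price_usd / hourly_rate_usd


# Hypothetical numbers, NOT actual RunPod pricing.
HYPOTHETICAL_RATE_USD = 2.0         # assumed price per GPU-hour
HYPOTHETICAL_HARDWARE_USD = 10_000.0  # assumed purchase price of a comparable GPU

# A six-hour experiment on one GPU.
print(rental_cost(HYPOTHETICAL_RATE_USD, hours=6))

# Rental hours needed before buying would have been cheaper.
print(break_even_hours(HYPOTHETICAL_HARDWARE_USD, HYPOTHETICAL_RATE_USD))
```

Under these assumed figures, short or occasional workloads stay far below the break-even point, which is the case the pay-as-you-go model is meant to serve.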

What Makes RunPod Unique?

- No need for costly hardware. Get GPU power on demand.

- Pay only for what you use. No wasted cost.

- Easy access for students, startups, and small teams.

- Scales as your project grows. No platform switch needed.

- Works with common tools, so existing machine learning workflows carry over.

Rating

| Category | Rating (⭐/5) |
| --- | --- |
| Ease of Use | 4.4/5 |
| Cost Efficiency | 4.8/5 |
| Accuracy and Reliability | 4.2/5 |
| Performance and Speed | 4.6/5 |
| Functionality and Features | 4.5/5 |
| Customization and Flexibility | 4.7/5 |
| Data Privacy and Security | 4.3/5 |
| Support and Resources | 4.2/5 |
| Integration Capabilities | 3.9/5 |
| Overall Score | 4.4/5 |
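
The review does not say how the overall score is derived. A minimal check, assuming it is the unweighted mean of the nine category ratings rounded to one decimal place, can be sketched as follows (the variable names are illustrative):

```python
from statistics import mean

# Category ratings copied from the table above (each out of 5).
ratings = {
    "Ease of Use": 4.4,
    "Cost Efficiency": 4.8,
    "Accuracy and Reliability": 4.2,
    "Performance and Speed": 4.6,
    "Functionality and Features": 4.5,
    "Customization and Flexibility": 4.7,
    "Data Privacy and Security": 4.3,
    "Support and Resources": 4.2,
    "Integration Capabilities": 3.9,
}

# Assumption: overall score = unweighted mean, rounded to one decimal place.
overall = round(mean(ratings.values()), 1)
print(overall)
```

Under that assumption the result matches the listed overall score of 4.4.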

Summary

RunPod is a cost-effective, flexible, and developer-friendly GPU cloud platform that competes with AWS, Google Cloud, and Azure on AI compute workloads. Zero egress fees, per-millisecond billing, FlashBoot serverless cold starts, and a Community Cloud marketplace give it pricing advantages for teams building AI products on constrained budgets. It is a strong option for developers, researchers, startups, and ML teams who need affordable, configurable, and scalable GPU compute without the complexity and hidden costs of major cloud providers.

Disclaimer

The pricing and other information related to RunPod may not be up to date. We recommend users refer to the official website of RunPod for the most accurate specifications and current pricing details.
